New Material

Monday 6 January 2025

For the past five years wind and solar have done an exceptional job of suppressing the (net) growth of fossil fuels in US power generation. However, the risk that’s now emerging—given the race to source new power demand to support the growth of AI—is that natural gas growth, already a problem, does a hard acceleration. Why might that happen? Two reasons. The bulk of global data center capacity growth is going to be concentrated in the US, at least in the near term. And also, the volume of new power generation that the commercial technology sector is going to demand may smooth out eventually, but at the front end will be sudden. We are at the front end right now. This raises the likelihood that on-site natural gas generation becomes the go-to solution for the industry. Think: mini, dedicated natural gas turbine arrays that potentially sit behind-the-meter (BTM) and thus avoid the entire traditional process by which new, standalone on-grid powerplants are built. Indeed, big tech is about to join the list of justifiably frustrated interests in the US—wind and solar developers especially—who are ready to expand the grid, but cannot do so because of legacy regulation.

As you can see in the chart below, energy sources in the US electricity system ex wind+solar have been held in check for five years now at roughly 3600 TWh, starting in 2020 and running through 2024. That’s a great demonstration of wind and solar’s rapid growth, but also of the hard problem of getting legacy generation to actually decline. The risk presented now, however, is that wind+solar may not be able to respond as quickly to sudden demand growth. If that happens, net generation from fossil fuels in US power will actually start increasing again.

The terms of this newly arising dilemma are becoming clear(er) as a number of very good analysts are stepping up to the plate to provide guidance. For example, if the tech industry is going to build on-site generation, why not choose instead wind and solar and batteries, with a small amount of natural gas as backup? That was the question answered by a consortium of researchers last month, publishing at the URL of offgrid.ai, who found that off-grid solar microgrids would not just be economic to deploy, but could meet the speed the industry requires. Notably, this research got push-back from some quarters for even daring to raise the prospect that natgas would be paired to such systems. But the tech industry is going to prioritize reliability and security, so we should be so lucky if solar with natgas and battery backup emerges as the common solution. Just to remind: Germany’s long-term national energy strategy is to have a modern grid that’s mainly powered by renewables but nevertheless has a good chunk of natgas backup. That’s a good benchmark.

Meanwhile, there is legitimate uncertainty about high-case projections for total power demand given the historical pattern in semiconductor manufacturing that sees processing power increase as energy requirements fall. This is a tricky set of factors to weigh, of course, because if a domain such as the US experiences rapid volume growth in data center capacity, that could overwhelm, in the short term, future gains to computational efficiency. On this question, Michael Liebreich has juggled a basket of uncertainties and has produced not a definitive answer, but an enormously helpful map of the terrain, if you will, and you should read his essay The Power and the Glory. There's a lot of position-taking right now on the emerging AI/powergrid landscape that's overly strong and premature. Liebreich’s essay respects the competing concerns, and offers an excellent platform for how to think about the problem as we head into a very active year for global AI growth.

Working with an existing materials science lab, an MIT researcher discovered that the introduction of an AI tool significantly raised the lab’s productivity. From the recent story in the Wall Street Journal:

The lab that Toner-Rodgers studied randomly assigned teams of researchers to start using the tool in three waves, starting in May 2022. After Toner-Rodgers approached the lab, it agreed to work with him but didn’t want its identity disclosed.

What Toner-Rodgers found was striking: After the tool was implemented, researchers discovered 44% more materials, their patent filings rose by 39% and there was a 17% increase in new product prototypes. Contrary to concerns that using AI for scientific research might lead to a “streetlight effect”—hitting on the most obvious solutions rather than the best ones—there were more novel compounds than what the scientists discovered before using AI.

Toner-Rodgers was a bit surprised himself. He had thought at best it would have just kept up with the scientists on novel discoveries. “You could have come up with a bunch of lame materials that are not actually helpful,” he said.

Cold Eye Earth takes the view that humanity doesn’t have the option to “take a pass” on AI, even if the AI buildout places more pressure on the already hard problem of lowering emissions. Like a call option, the payoff to AI is asymmetrical. Wildly asymmetrical. If, in the early days of large language model iteration, we are already realizing verified gains in materials science, then the prospect that much larger scientific gains can be made merits consideration. We not only remain far away from decarbonizing the grid, but have made only tiny progress in the world’s hard-to-abate industrial sectors. There’s a whole universe of discovery that could be opened up by AI.

And again, unless you are keeping up on the advancements, this will surely seem like wishful thinking. For example, OpenAI recently demonstrated, but did not release, its successor to o1, called OpenAI o3. The ability of o3 to tackle extremely hard and idiosyncratic math problems is eye-opening. You can review the presentation of those capabilities here at the OpenAI YouTube channel.

One of the unexpected outcomes from the MIT research cited above was the discovery of the emotional effect on humans in the materials science lab, in the aftermath of AI-aided advances. Respondents voiced their feeling of unease, if not bewilderment, at AI’s ability to do some portion of their jobs. One professional was struck with the realization that all their education might now be reduced in value. Everyone still tempted to believe that AI is just a bubble, won’t amount to much, or is not even real should spend a few moments considering this final flourish from the WSJ article:

While many AI optimists believe the technology will reduce the number of tedious tasks people have to perform, the scientists felt that it took away the part of their jobs—dreaming up new compounds—they enjoyed most. One scientist remarked, “I couldn’t help feeling that much of my education is now worthless.”

Further reading: Artificial Intelligence, Scientific Discovery, and Product Innovation, Toner-Rodgers, 2024.

In Nicolas Roeg’s 1976 film, The Man Who Fell to Earth, David Bowie plays an alien who survives his time on earth by formulating and selling breakthrough discoveries in chemicals and materials. You can see where this is going! Here in the 21st century humanity sits on a veritable mountain range of discoveries and knowledge that’s accumulated over time, mainly the past 500 years. So far, AI is operating like a supremely talented student that’s aggregated a portion of that knowledge, and is now beginning to generate solutions to hard problems. We are either just a few steps away from more significant breakthroughs, or have already taken a few steps towards them.

As a general example, many readers may be aware that a number of STEM disciplines make progress in the modern era through mathematics. If a scientist, say an astrophysicist, now uses math to help solve problems that are hard to test, then AI offers the promise to perform the kinds of breathtaking work done by Newton and Einstein. Indeed, Geoffrey Hinton, Nobel Prize winner and “the godfather” of AI, recently said that large language models and other emergent AI are, to him, “the best models we have for how humans think.” Some don’t agree. But at least consider Hinton’s point. For him, the breakthroughs that finally arrived in AI were the result of massive scaling: once we accumulated enough computing power, we finally got a system that started to approach some of the output of which the brain is capable. In other words, Hinton wants us to consider that despite our self-regard, and what we know as human creativity, passion and drive, our brains largely work because of similar scaling (which was achieved through evolution).

Cold Eye Earth suggests the following proposition: if you pare back the wildest fantasy of what AI may be capable of in the next 25 years by 95%, you are likely still in possession of an intelligence that could produce breakthroughs of the kind we were given by Newton, Einstein, Curie, Mandelbrot, Euler, Ramanujan, Rutherford, Turing, Planck, Feynman and so many more not named here. OK, one more, a personal favorite: Darwin.

• Breaking: As Cold Eye Earth goes to press on Sunday night here in the US, Sam Altman of OpenAI has just released a blog post which has the internet abuzz, and with good reason. There have been rumors all week that OpenAI was either near a breakthrough or believed it was near one. Tonight, Altman wrote the following:

We are now confident we know how to build AGI as we have traditionally understood it. We believe that, in 2025, we may see the first AI agents “join the workforce” and materially change the output of companies.

Natural gas is close to overtaking oil as the top energy source in the United States. While this is not revolutionary, it’s useful to note that the most important factor in this change is that US oil consumption has neither advanced nor fallen much in the past twenty years. So, while the country’s economy and population expanded, its oil demand oscillated. Meanwhile, natural gas exploited the rapid decline of coal, and also the inability of US wind and solar to entirely fill the coal gap on their own. Just to remind, the other reality that makes natural gas so dangerous is its extremely low cost. For those new to the letter, please see the last issue, Revenge of the Fossil Fuels, on this very point.

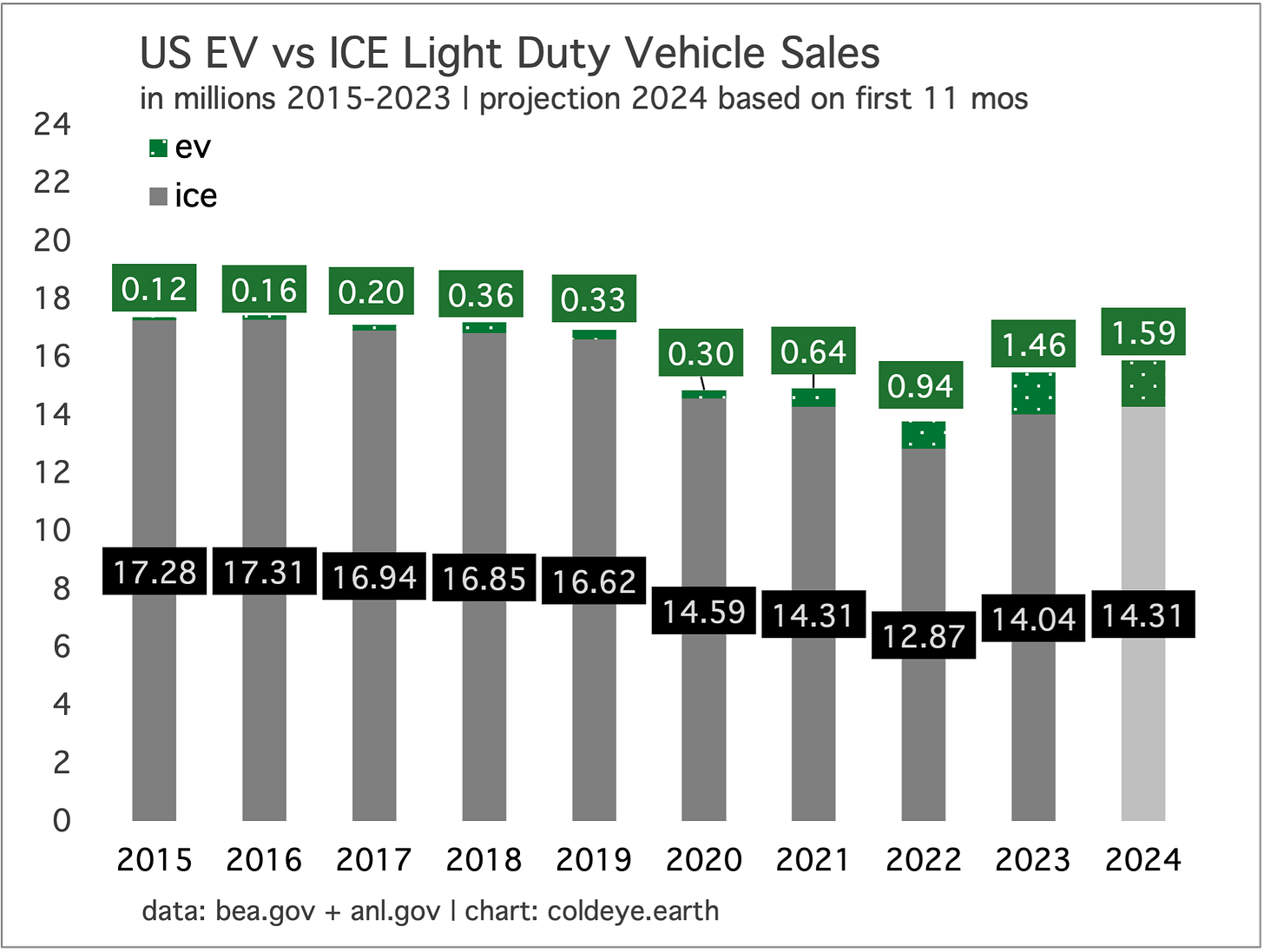

Over a decade after electric vehicles entered broad availability in the US, they have now reached 10% market share. That does not match the fast adoption seen across a number of consumer purchases over the past century, and must be regarded as a glacial pace of uptake. To be sure, a car is more expensive than most major appliances, but I think everyone can agree you will surely not decarbonize transportation at such rates. (Contrast this with China, where in 2024 EV sales started to reach and surpass 50% market share.)

The US electric vehicle market of course faces new challenges. The Trump administration does not need Congress to change regulations around vehicles, and we have known for some time they would likely lower or erase tax incentives to purchase new EVs. So, 2025 is not likely to be a great year for electric vehicles.

One note of optimism is worthy of consideration, however. New technology that benefits from subsidies must eventually cross the moat and face markets, standing on its own. There may be unexpected outcomes that arrive not this year, but perhaps in the next few years, which indicate that the cancellation of subsidies has made the EV market stronger. Once that happens, nothing short of outright EV bans would be able to stop EV growth, because, as we know, EV lifetime value to the owner is already pretty attractive.

—Gregor Macdonald